Make your debut as a rock star of business.

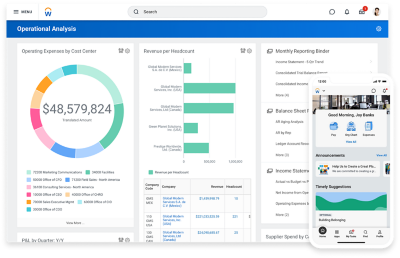

Only Workday unites finance and HR on one platform where AI and continuous innovation are built into the core. It’s how we help customers empower their people, make fast, confident decisions, and drive flawless operations to move business forever forward.

Best-in-class applications for finance, HR, and more.

Move forward faster with collaborative, continuous planning.

Embedded AI for maximum performance.

WHO SUCCEEDS WITH US

Real rock stars of business. Epic results.

Reinvigorated skills tracking with machine learning.

Made better workforce decisions with full insight.

Achieved single view across finance and HCM.

Increased career advancement opportunities.

Increased growth and efficiency with actionable data.

WHAT WE DO

We help you solve your greatest business challenges.

Be ready for what comes next.

As your business needs change, you need to be able to pivot—fast. Our adaptable architecture helps you do just that.

Empower decisions at every level.

With one source for financial, people, and operational data, everyone can access real-time insights to make sound decisions.

A technology foundation you can trust.

We never stop innovating. And you can count on us to deliver technology that fuels your growth and keeps your data safe.

Amplify your team’s performance with AI.

Organizations that use AI to map the skills of their workforce will be positioned to identify and train their employees for the jobs of tomorrow. The future of work is here—and Workday is leading the way with ethical AI that puts people first.

WHY PARTNER WITH US

A proven leader in finance and HR—time and time again.

A Leader in 2023 Gartner® Magic Quadrant™ for Cloud ERP for Service-Centric Enterprises

A Leader in 2023 Gartner® Magic Quadrant™ for Cloud HCM Suites

A Leader in the 2023 Gartner® Financial Planning report

WHAT WE’RE ABOUT

We’re shaking up the world of enterprise software.

We’re doing right by our employees, customers, and community.

We’re building a company that’s one of the best places to work.